A new security threat has emerged around AI-assisted coding: Slopsquatting, an attack that exploits AI-first development and the hallucinations of AI models. If you use AI in your workflow through editors such as Cursor, Windsurf and the like, you are exposed to this threat. In this post I will tell you more about it and how to protect yourself from it.

First, let’s briefly talk about the cultural shift that is increasing exposure to this kind of vulnerability, and about the underlying cause that makes this threat so dangerous.

Replacing Developers With AI

A growing number of people, especially non-technical ones, write posts on social media such as “AI writes 90% of our code” or “AI will replace junior developers in X years”. Even CEOs of companies such as Meta, Google and OpenAI promote this kind of narrative.

Many modern inventions, such as factory robots, compilers and calculators, fundamentally transformed the way we work. Their behavior is predictable and deterministic, meaning that for a given input you can expect the same output. If you type 2+3 in your calculator, it will always compute 5. This is not the case for generative AI, which is fundamentally based on statistical algorithms whose output is non-deterministic.

Software development using agents and AI coding editors has quickly gained popularity, and an increasing number of people compare AI with compilers. The main problem with this comparison is that compilers are deterministic tools, while AI is not.

Large Language Models (LLMs), the underlying technology of generative AI, are sophisticated tools built on techniques such as neural networks and deep learning. These techniques come from Machine Learning (ML), a field of AI concerned with the development of statistical algorithms that can learn from data.

To simplify, LLMs are essentially very big neural networks trained on enormous amounts of data. An LLM accepts an input text, also called a prompt, and predicts the next words. This generative process is based on statistics and it can lead to unexpected results.
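As a rough illustration of what "predicting the next word based on statistics" means, here is a minimal Go sketch. It is not how a real LLM is implemented: the `candidate` type, the `pickNext` function and the probabilities are all invented for this example. It only shows that sampling from a probability distribution can return different words for the same prompt.

```go
package main

import (
	"fmt"
	"math/rand"
)

// candidate is one possible next word with its (invented) probability.
type candidate struct {
	word string
	prob float64
}

// pickNext selects a word by walking the cumulative distribution until it
// passes r, where r is expected to be a random number in [0, 1).
func pickNext(cands []candidate, r float64) string {
	cum := 0.0
	for _, c := range cands {
		cum += c.prob
		if r < cum {
			return c.word
		}
	}
	// Guard against rounding errors: fall back to the last candidate.
	return cands[len(cands)-1].word
}

func main() {
	// Hypothetical distribution for the prompt "The capital of France is".
	cands := []candidate{{"Paris", 0.90}, {"Lyon", 0.07}, {"Rome", 0.03}}

	// The same prompt is sampled five times; the words may differ per run.
	for i := 0; i < 5; i++ {
		fmt.Println(pickNext(cands, rand.Float64()))
	}
}
```

Most runs print the likely word, but nothing prevents an unlikely one from being picked now and then.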

AI Hallucinations

Take any AI model, ask it how many times a letter appears in a given word, and try it multiple times. Here is one response I got from GPT-4o mini:

Prompt: can you tell me how many letters p are in the word pineapple?

Response: There are two “p” letters in the word pineapple.

This is the non-determinism of LLMs in action: the word pineapple contains three “p” letters, not two. If you keep asking the AI to count letters in a word, you have no guarantee that the result is correct every time. This demonstrates how the AI can give the wrong answer even for such a simple prompt. This type of behavior, also known as AI hallucination, can be dangerous when applied to more complex tasks.
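For contrast, the same question answered by ordinary deterministic code gives the same result on every run. The sketch below uses `strings.Count` from the Go standard library; the helper name `countLetter` is made up for this example.

```go
package main

import (
	"fmt"
	"strings"
)

// countLetter returns how many times letter occurs in word.
func countLetter(word string, letter rune) int {
	return strings.Count(word, string(letter))
}

func main() {
	// Always prints 3, no matter how many times the program is run.
	fmt.Println(countLetter("pineapple", 'p'))
}
```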

Let me give you another example of AI hallucinations applied to programming.

Here is a fragment of code that an AI generated while I was experimenting with the jsonrpc package from the standard library of the Go programming language:

    import (
        "net/http"
        "net/rpc/jsonrpc"
    )

    func handler(w http.ResponseWriter, r *http.Request) {
        jsonrpc.NewServerCodec(r.Body, w)
    }

This code doesn’t compile: the arguments passed to the function NewServerCodec are incorrect!

The official package documentation says that the function accepts a single parameter of type io.ReadWriteCloser, but the AI passed two arguments, neither of which has that type.
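For comparison, here is one possible way to satisfy the documented signature. This is a sketch, not necessarily what the AI intended and not the only valid approach. The `httpConn` adapter, the `Arith` service, its `Args` type and the `callAdd` helper are hypothetical names introduced for this example; `jsonrpc.NewServerCodec` and `(*rpc.Server).ServeRequest` are the standard library calls.

```go
package main

import (
	"encoding/json"
	"fmt"
	"io"
	"net/http"
	"net/http/httptest"
	"net/rpc"
	"net/rpc/jsonrpc"
	"strings"
)

// Args and Arith form a hypothetical service used only for this illustration.
type Args struct{ A, B int }

type Arith struct{}

// Add follows the method shape required by net/rpc.
func (Arith) Add(args *Args, reply *int) error {
	*reply = args.A + args.B
	return nil
}

// httpConn (a made-up name) glues the request body and the response writer
// into the single io.ReadWriteCloser that NewServerCodec expects.
type httpConn struct {
	io.Reader
	io.Writer
}

// Close is a no-op: the HTTP server closes the request body on its own.
func (httpConn) Close() error { return nil }

var server = rpc.NewServer()

func init() {
	if err := server.Register(Arith{}); err != nil {
		panic(err)
	}
}

func handler(w http.ResponseWriter, r *http.Request) {
	codec := jsonrpc.NewServerCodec(httpConn{Reader: r.Body, Writer: w})
	// ServeRequest handles exactly one request synchronously.
	if err := server.ServeRequest(codec); err != nil {
		http.Error(w, err.Error(), http.StatusInternalServerError)
	}
}

// callAdd sends one JSON-RPC request through handler and decodes the result.
func callAdd(a, b int) (int, error) {
	body := fmt.Sprintf(`{"method":"Arith.Add","params":[{"A":%d,"B":%d}],"id":1}`, a, b)
	req := httptest.NewRequest(http.MethodPost, "/rpc", strings.NewReader(body))
	rec := httptest.NewRecorder()
	handler(rec, req)

	var resp struct {
		Result int         `json:"result"`
		Error  interface{} `json:"error"`
	}
	if err := json.NewDecoder(rec.Body).Decode(&resp); err != nil {
		return 0, err
	}
	if resp.Error != nil {
		return 0, fmt.Errorf("rpc error: %v", resp.Error)
	}
	return resp.Result, nil
}

func main() {
	sum, err := callAdd(2, 3)
	fmt.Println(sum, err)
}
```

The point is that the working version needs an adapter the hallucinated call silently skipped, which is exactly the kind of detail a type checker catches.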

The hallucinated call was easy to detect thanks to the compiler and static types, but it would have been a lot harder to detect in a dynamically typed language.

This is just another small example of AI hallucinating. It shows the limitations of LLMs: they do not really know how to think. They are very sophisticated text prediction tools, not truly intelligent ones.

Hallucinations are actually very common because of the statistical nature of LLMs. More advanced models nowadays use reasoning algorithms that simulate a chain of thought before generating an answer. This greatly improves the quality of the responses, but it does not solve the problem of hallucinations completely.

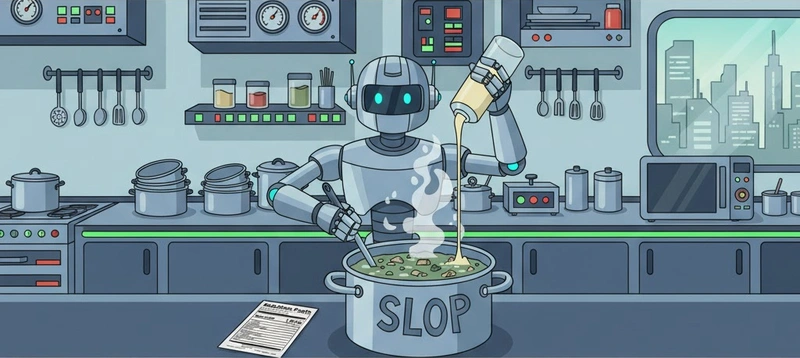

AI hallucinations are a form of what is often called AI slop, and they represent the main building block exploited by Slopsquatting.

What is Slopsquatting?

Slopsquatting is a form of cybersquatting: it consists of registering package names that do not exist yet but that a Large Language Model (LLM) may accidentally generate.

Here’s how it works:

When prompted to solve a coding task, AI agents might “hallucinate” the name of a dependency that either doesn’t exist or is a misspelling of a legitimate one (the same trick used in typosquatting).

For example, if you are developing with Node.js, the AI might suggest installing react-js or raect instead of the official package react.

Malicious actors can then register these subtly altered or non-existent package names. When a developer, relying on the AI’s suggestion, tries to install the package, they inadvertently download the malicious version. This malicious package can then execute arbitrary code, steal sensitive data, or compromise the entire development environment.

The danger of Slopsquatting lies in its insidious nature. Developers often trust AI recommendations implicitly, assuming suggested package names are correct. Subtle typos make deception hard to spot. This can quickly become an issue as developers might spend less time reviewing code due to time pressure or an overestimation of the AI’s capabilities, leading to a higher chance of introducing slop into the codebase.
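Subtle typos are hard for a human to spot but easy for a program to measure. The following Go sketch flags names that resemble a known package, using edit distance plus a simple prefix and suffix check. The function names, the list of known packages and the distance threshold of 2 are assumptions chosen for this illustration, not a vetted detection rule.

```go
package main

import (
	"fmt"
	"strings"
)

func min3(a, b, c int) int {
	if b < a {
		a = b
	}
	if c < a {
		a = c
	}
	return a
}

// editDistance returns the Levenshtein distance between a and b (byte-wise).
func editDistance(a, b string) int {
	prev := make([]int, len(b)+1)
	for j := range prev {
		prev[j] = j
	}
	for i := 1; i <= len(a); i++ {
		curr := make([]int, len(b)+1)
		curr[0] = i
		for j := 1; j <= len(b); j++ {
			cost := 1
			if a[i-1] == b[j-1] {
				cost = 0
			}
			curr[j] = min3(prev[j]+1, curr[j-1]+1, prev[j-1]+cost)
		}
		prev = curr
	}
	return prev[len(b)]
}

// lookalikeOf returns the known package that name resembles, or "" when name
// is itself known or resembles nothing in the list.
func lookalikeOf(name string, known []string) string {
	for _, k := range known {
		if name == k {
			return ""
		}
	}
	for _, k := range known {
		if editDistance(name, k) <= 2 ||
			strings.HasPrefix(name, k+"-") || strings.HasSuffix(name, "-"+k) {
			return k
		}
	}
	return ""
}

func main() {
	// Hypothetical list of packages the team already trusts.
	known := []string{"react", "express", "lodash"}
	for _, name := range []string{"react", "raect", "react-js", "left-pad"} {
		if k := lookalikeOf(name, known); k != "" {
			fmt.Printf("%s looks like %s: verify before installing\n", name, k)
		}
	}
}
```

A check like this produces false positives for legitimate packages with similar names, so its output is a prompt for manual review rather than a verdict.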

How Do I Prevent Slopsquatting?

Preventing it can be tricky, as we cannot fully control the output of LLMs due to their underlying statistical nature. What we can do is instruct the AI with richer prompts and integrate additional quality checks into our workflow:

- Be skeptical of all AI-generated code and review it fully

- Verify package names against official documentation or trusted repositories

- Use dependency scanning tools to detect malicious packages and typosquatting attempts

- Consider using private package registries for internal projects

- Use static type checkers and CI/CD to validate code

- Set the AI agent to planning mode and always verify its plan step by step

- Sandbox your environments to limit the damage

- Limit the permissions of your AI agent to only the tools it requires to fulfill a task

- Most importantly: educate your colleagues about AI hallucinations, Slopsquatting and typosquatting.
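As one example of an additional quality check, the sketch below reads a Node.js `package.json` and reports every dependency that is not on an approved list. It could run in CI before any install step. The `manifest` type, the `unapproved` function and the approved list are hypothetical; only the `dependencies` and `devDependencies` field names come from the `package.json` format.

```go
package main

import (
	"encoding/json"
	"fmt"
	"sort"
)

// manifest holds the two package.json sections this sketch inspects.
type manifest struct {
	Dependencies    map[string]string `json:"dependencies"`
	DevDependencies map[string]string `json:"devDependencies"`
}

// unapproved returns, in sorted order, the dependency names found in
// manifestJSON that are missing from the approved set.
func unapproved(manifestJSON []byte, approved map[string]bool) ([]string, error) {
	var m manifest
	if err := json.Unmarshal(manifestJSON, &m); err != nil {
		return nil, err
	}
	seen := map[string]bool{}
	var out []string
	for _, deps := range []map[string]string{m.Dependencies, m.DevDependencies} {
		for name := range deps {
			if !approved[name] && !seen[name] {
				seen[name] = true
				out = append(out, name)
			}
		}
	}
	sort.Strings(out)
	return out, nil
}

func main() {
	// Hypothetical manifest containing one misspelled dependency.
	pkg := []byte(`{"dependencies": {"react": "^18.0.0", "raect": "^1.0.0"}}`)
	approved := map[string]bool{"react": true}

	names, err := unapproved(pkg, approved)
	if err != nil {
		fmt.Println("cannot parse manifest:", err)
		return
	}
	for _, n := range names {
		fmt.Println("not on the approved list:", n)
	}
}
```

An allowlist is stricter than scanning for known malicious packages: a hallucinated name is rejected simply because nobody has reviewed it yet.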

Conclusions

While AI offers transformative potential for software development, emerging threats such as Slopsquatting highlight the perils of blindly relying on it without any supervision.

Just as you wouldn’t use an LLM to sort a list of numbers when efficient algorithms exist, delegating all coding tasks to this technology without critical oversight might be considered an incorrect choice.

Things will certainly improve in the future and AI might become more reliable. For now, let’s not forget that LLMs are still based on statistics, that the risk of hallucinations is always present, and that there are bad actors out there taking advantage of this.

Stay safe!

Did you know about Slopsquatting before? I would love to know more about your experience with AI-first development.